How DeepSeek Revolutionizes AI

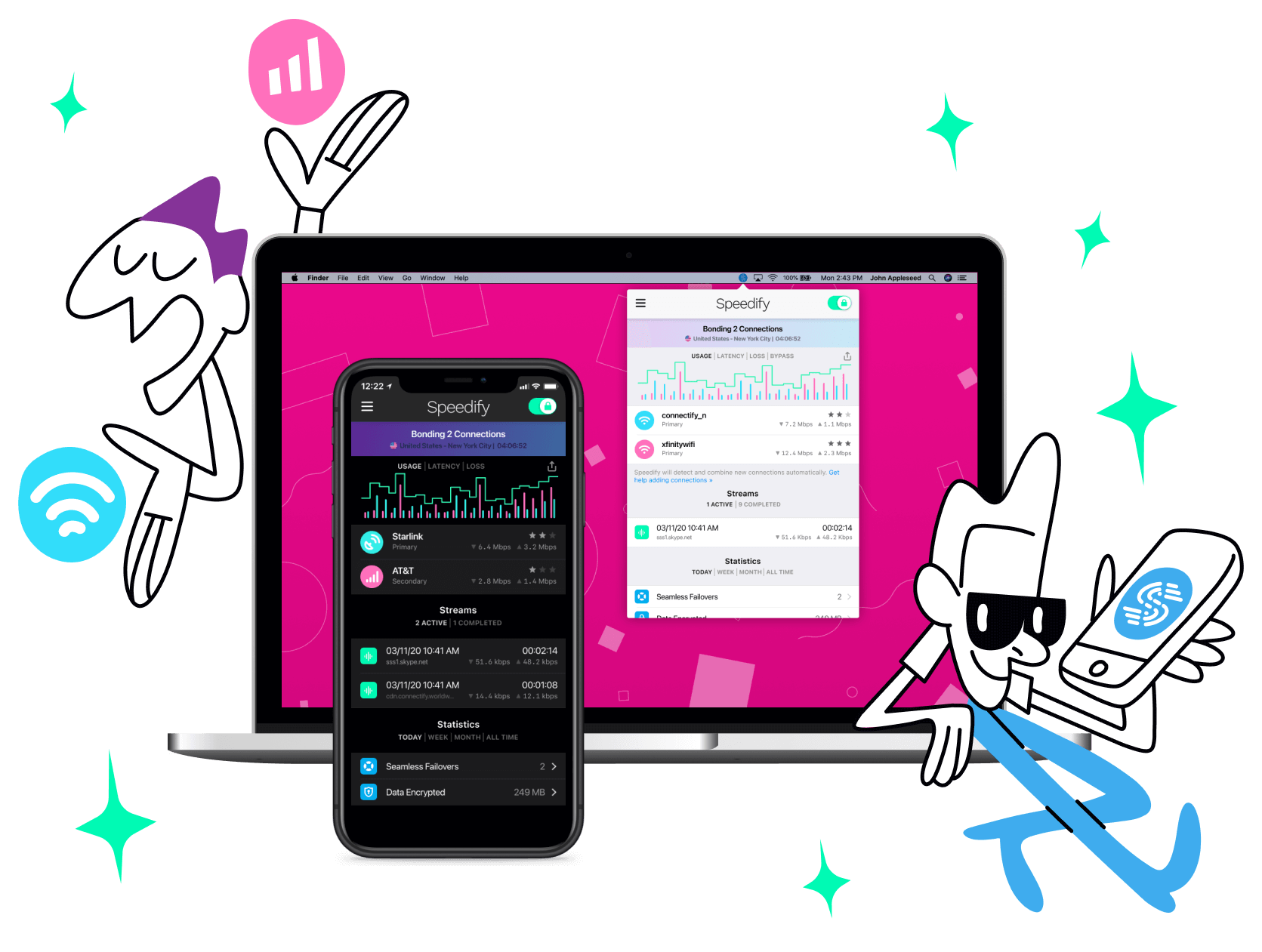

DeepSeek Got the Most Out of Old Nvidia GPU Chips

Ryan: DeepSeek R1 is a new Chinese open source AI many are calling a Sputnik moment. Its release has crashed stocks of American AI and chip companies like NVidia and Microsoft. But, it's actually powered by NVidia chips. Old ones. Do you think DeepSeek R1 is a Sputnik moment?

Alex Gizis: Yeah, actually, I think that's a fair comparison. So this isn't them getting there first. This is the Chinese getting just one step behind us on AI. I think we imagined we had this vast lead, and we don't.

Ryan: So how did DeepSeek manage to build such a powerful AI on old NVidia chips?

Alex Gizis: There's a whole bunch of tricks, and the interesting thing is they published a paper and they published the code, and other companies and groups are replicating what they did, so we know it works.

So on the GPUs, they didn't use CUDA for the whole thing. CUDA is the C API for writing code for the GPU. They wrote assembly language to do their own networking layer with smart caching to squeeze extra performance out of these chips. And it works at every level of the stack.

At the higher level, one of the things that caught my eye was that they announced their new smart load balancing algorithm was part of it. I said, wait a minute, we do smart load balancing algorithms. But of course that wasn't for the network. They're load balancing the experts. Back in ChatGPT 3.5, the whole AI was one big thing. You gave it a prompt, and it looked through everything it had ever learned to put together the best answer.

Starting with GPT 4, and of course it's the same with the latest DeepSeek R1, there are instead a lot of different little AIs inside of it that were trained on different material. So if a person asks the AI a math question, it doesn't have to go through what it learned reading the Jane Austen novels. It can just leave that section off and not scan through that memory.

As you go through the math and the science, it lets various sections of the AI light up and work together to answer. They came up with a smarter algorithm for dividing that work up. They have different size experts, ones that know a lot and tiny ones that are really specialized on different bits of knowledge, and it figures out which ones might help and turns on just those. That ends up meaning it needs a lot less CPU to answer the question.
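The "turn on only the experts that might help" idea can be sketched as top-k routing. This is a minimal illustration, not DeepSeek's actual router: the function names, the toy experts, and the gate scores are all hypothetical, and the load balancing step Alex mentions is not shown.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def route_top_k(gate_scores, k=2):
    """Pick the k highest-scoring experts and renormalize their weights.

    gate_scores: one raw score per expert (a hypothetical router output).
    Returns (expert_index, weight) pairs; every other expert stays off.
    """
    probs = softmax(gate_scores)
    top = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:k]
    kept = sum(probs[i] for i in top)
    return [(i, probs[i] / kept) for i in top]

def mixture_output(x, experts, gate_scores, k=2):
    """Run only the selected experts and blend their outputs by weight."""
    return sum(w * experts[i](x) for i, w in route_top_k(gate_scores, k))

# Four toy "experts"; in a real model each would be a neural sub-network.
experts = [lambda x: x + 1, lambda x: x * 2, lambda x: x - 3, lambda x: x ** 2]
# Hypothetical router scores for one input: experts 1 and 3 look most relevant.
scores = [0.1, 2.0, -1.0, 2.0]

chosen = route_top_k(scores, k=2)
result = mixture_output(3.0, experts, scores, k=2)
```

Only two of the four experts run for this input, which is where the compute saving comes from: the cost scales with `k`, not with the total number of experts.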

How DeepSeek Got to the Top in So Little Time

Ryan: So what's the difference between GPT 4's experts and DeepSeek R1's experts?

Alex Gizis: Well, as you know, OpenAI is no longer open. They started with this mission of putting out their code, but they've become very secretive. So there's this guess, people believe, and there's lots of evidence from the hints as you play with it, that there are 16 experts that make up ChatGPT. But they don't answer that. They're not answering that anymore.

In fact, they put out ChatGPT o1, this new smarter engine that does chain of thought, and they partly gave hints about how it worked. Apparently DeepSeek took those hints, and the paper basically said: we think we figured out what ChatGPT is doing, we came up with an algorithm that follows the hints they gave, we filled in all the gaps, and here it is, and it works.

Ryan: So are they using a lot more experts, a lot more than 16, or is that not known?

Alex Gizis: Yeah, so the other crazy thing they did to save energy and time and CPU is they trained it on other AIs.

And this apparently is what OpenAI has been doing. They came out with GPT 4, and a little while later they came out with GPT 4 Turbo. ChatGPT 4 Turbo was another AI that had been trained on ChatGPT 4. And so it ended up much smaller and more compact, because it pulled out everything important that ChatGPT 4 had learned.

There was lots of unimportant stuff that it was able to leave out, so it came out as a smaller, more compact version. So they've been doing this already. But yeah, for DeepSeek and probably everyone doing AI stuff now, why wouldn't you go check the open source ones and see what lessons they know that you don't?

Pull them in. Go to ChatGPT. Ask it some questions you don't know the right answer to. They're doing that because it saves time and energy if somebody has already trained an AI and pulled the important parts out. This means that leads on having the best model in the marketplace are much smaller than you would think. ChatGPT o1 was the greatest model in the world.

How long did it have? It had about three months, and now DeepSeek is right there with it. So those sorts of advantages seem much smaller.
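Training one AI on another, as described above, is usually called distillation. One textbook form has a small "student" model match the output probabilities of a larger "teacher". The sketch below shows only that loss, with made-up logits. It is an assumption for illustration, not a confirmed description of what OpenAI or DeepSeek did.

```python
import math

def softmax(logits, temperature=1.0):
    """Convert logits to probabilities; a higher temperature softens them."""
    scaled = [value / temperature for value in logits]
    peak = max(scaled)
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's.

    The loss is 0 when the student reproduces the teacher exactly and grows
    as their predictions drift apart.
    """
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

# Hypothetical logits over three possible next tokens.
teacher = [4.0, 1.0, 0.5]
good_student = [4.0, 1.0, 0.5]   # agrees with the teacher
poor_student = [0.5, 1.0, 4.0]   # prefers the wrong token

loss_good = distillation_loss(teacher, good_student)
loss_poor = distillation_loss(teacher, poor_student)
```

Minimizing this loss over many prompts pushes the student toward the teacher's answers without the student ever seeing the teacher's original training data.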

AI Market Evolution

Ryan: I've seen a lot of people saying that DeepSeek is ahead of GPT or ahead of OpenAI.

Alex Gizis: I think as far as its ability to deliver results, it's just one step behind. I think as far as its ability to deliver results per watt of power it uses, it's nine steps ahead of OpenAI.

So it depends on how you look at it. It's a giant revolution in how much smarts they're able to get out of each GPU. But the end result, it's not smarter.

Ryan: So what does that mean for the AI market?

Alex Gizis: I don't think we're going to have a giant monopoly in AI software. I don't think OpenAI is going to be the company that everyone's buying AI from.

I think that it gets easier and easier to train models. I think more and more people will train models. Lots and lots of companies will have their AI. I think departments are going to have their AI, right? I think this is going to get democratized, not quite as much as the web was, where you could build your own website on your laptop and people did.

I did. But I think lots and lots of companies, even small companies, will be able to have their own AIs without having to pay the mighty OpenAI to run it for them. I think it's going to take off.

How Load Balancing Got DeepSeek So Far So Quickly

Ryan: So could DeepSeek's advanced load balancing be applied to network load balancing?

Alex Gizis: At some level, there's a similarity: having some brains about spreading jobs out to different things.

I think network load balancing is pretty different, although today it's pretty dumb. We've actually been working here on smarter load balancing algorithms for the new version of Speedify. I think there's a lot more brains you can have: as you start giving load to an internet connection and see it slow down, you shift load to the others.

A lot of load balancing is just kind of round robin, going around giving traffic to each connection in turn. I think we can do much better. So there's this vague similarity, in that everybody's doing smarter load balancing algorithms, but they're not going to be the same algorithm.
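The contrast between round robin and a balancer that reacts to how each connection is performing can be sketched as follows. This is a toy model, not Speedify's algorithm: the `AdaptiveBalancer` class, the link names, and the speeds are hypothetical, and queues never drain here, which a real balancer would have to handle.

```python
import itertools

def round_robin(links):
    """Cycle through links in order, ignoring how each one is performing."""
    return itertools.cycle(links)

class AdaptiveBalancer:
    """Send each chunk to the link expected to finish it soonest."""

    def __init__(self, speeds_mbps):
        self.speeds = dict(speeds_mbps)                     # estimated Mbps per link
        self.queued = {name: 0.0 for name in self.speeds}   # megabits already assigned

    def pick(self, chunk_megabits):
        def finish_time(name):
            return (self.queued[name] + chunk_megabits) / self.speeds[name]
        best = min(self.speeds, key=finish_time)
        self.queued[best] += chunk_megabits
        return best

    def observe(self, name, measured_mbps):
        """Update a link's speed estimate, e.g. after it slows down under load."""
        self.speeds[name] = measured_mbps

links = {"wifi": 45.0, "cellular": 10.0}

# Round robin splits six chunks evenly, even though Wi-Fi is much faster.
rr = round_robin(list(links))
rr_picks = [next(rr) for _ in range(6)]

# The adaptive balancer sends most chunks to the faster link.
balancer = AdaptiveBalancer(links)
adaptive_picks = [balancer.pick(10.0) for _ in range(6)]

# If Wi-Fi is then measured to have slowed down, new traffic shifts away from it.
balancer.observe("wifi", 5.0)
after_slowdown = balancer.pick(10.0)
```

With these numbers, round robin gives each link three chunks, while the adaptive version gives Wi-Fi five and cellular one, then moves to cellular once Wi-Fi is observed to slow down.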

Ryan: But hypothetically if they had the newest NVidia chips...

Alex Gizis: So interestingly, a lot of the stuff they did was specifically to work around the weaknesses in the old chips.

So the new chips don't have those weaknesses. Although I've got to think that if you apply that same sort of thinking, what can we do to squeeze more performance, I bet they'll find more stuff in the new chips where they can squeeze out more performance. But a lot of the improvements came where they wrote assembly language to improve the networking, and I think the networking is actually better on the new GPUs. So it's a little different, and a few things would have to be redone, but I guess I have faith that team would do it.

You give them new chips and tell them to squeeze as much as they can out. I bet they find stuff.

Ryan: Because they're also working with older InfiniBand, right?

Alex Gizis: Yes. So they're using older GPUs and they're, we believe, stuck with 100 gigabit InfiniBand networking cards to combine all these GPUs together, which sounds pretty fast.

No, they're maxing it out. So they wrote a lot of smart networking code. And this actually is networking code for the GPUs to talk to each other efficiently, with a little cache there to keep answers they got from other servers, to get better performance. Smart stuff: if they need something from another GPU, they go on and work on other things until the answer comes back, so it never gets stuck waiting on the network.

They did lots and lots of stuff to squeeze performance out of the network as well. Sorry, I said their load balancing wasn't networking stuff. They did do networking stuff in this thing. They really tried to squeeze out performance, and it seems like they pulled it off.
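The two ideas above, caching answers from other servers and doing other work while a request is in flight, can be sketched with Python's `asyncio`. This is only an analogy on a CPU, not DeepSeek's GPU code: `RemoteCache`, `slow_remote_fetch`, and the key name are hypothetical, and the sleep stands in for network latency.

```python
import asyncio

class RemoteCache:
    """Remember answers fetched from another node so repeats skip the network."""

    def __init__(self, fetch):
        self._fetch = fetch        # coroutine function: key -> value
        self._cache = {}
        self.remote_calls = 0

    async def get(self, key):
        if key not in self._cache:
            self.remote_calls += 1
            self._cache[key] = await self._fetch(key)
        return self._cache[key]

async def slow_remote_fetch(key):
    await asyncio.sleep(0.05)      # stand-in for a round trip to another node
    return f"value-for-{key}"

async def worker(cache):
    # Issue the remote request without waiting for the answer.
    pending = asyncio.create_task(cache.get("block-7"))
    await asyncio.sleep(0)         # yield once so the request actually starts
    # Do useful local work while the request is in flight.
    local = sum(i * i for i in range(1000))
    remote = await pending         # collect the answer once it is needed
    again = await cache.get("block-7")   # second lookup is served from the cache
    return local, remote, again

cache = RemoteCache(slow_remote_fetch)
local, remote, again = asyncio.run(worker(cache))
```

The worker only blocks when it truly needs the remote value, and the second lookup never touches the network, so `cache.remote_calls` stays at 1.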

NVidia and Other AI Companies' Stock Crash Explained

Ryan: So the DeepSeek R1 release crashed the stocks for NVidia and other AI companies like Microsoft. Why?

Alex Gizis: You know, the NVidia one's funny because, to me, I thought this is good news for NVidia. I don't know if you know this, but the Biden administration put in all these export restrictions to stop the fancy N800 chips and the super high speed InfiniBand networking cards from being sold to China, because they thought it would help them catch up with OpenAI on the software front.

But instead these guys have taken the old chips, the ones that were considered too slow to worry about and so aren't covered by the export restrictions, and they managed to squeeze so much performance out of them that they've caught up. They're building AIs on these old chips that are as powerful as the ones OpenAI is building on the various chips.

The other thing is that on average, 100% of the revenue that AI companies bring in is going to pay for their NVidia chips. 100%. It's not just that there's no profit. They can't even pay their people except with investor money. They're spending all their money, and they're pulling in more money, and they're spending that.

It's all money losing. For these AI companies to start making money, the NVidia chips have to either be much cheaper or be able to do a lot more. Because really, if it's going to be a sustainable business, they can't spend more than, say, 20% of their revenue on chips. Because they have to pay salaries.

They have to pay for electricity. They have to pay for offices. All that stuff. That means all of NVidia's revenue today is not sustainable. If the investors stop pouring in more money, NVidia's revenue is going to go away. They need something to happen to make it so you can squeeze a lot more performance out of their chips.

So that their customers can actually make a profit. And I think DeepSeek's new performance increases on the old chips in this open source code they just put out shows a path to a sustainable business for NVidia. I don't think their stock should have crashed.
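The arithmetic behind that argument can be made explicit. Taking Alex's figures at face value (chips at 100% of revenue today, about 20% as a sustainable share), and assuming revenue and workload stay the same, the sketch below computes how much more work each chip dollar would have to deliver. The function name is hypothetical.

```python
def required_efficiency_gain(current_chip_share, target_chip_share):
    """How many times more work per chip dollar is needed to hit the target share.

    Both shares are fractions of revenue. Assumes revenue and the amount of
    AI work being done stay constant, so only the chip bill changes.
    """
    return current_chip_share / target_chip_share

# Figures quoted in the interview: 100% of revenue today, 20% as a sustainable share.
gain = required_efficiency_gain(1.00, 0.20)
```

Under those assumptions the chips would need to do about five times as much work per dollar, either by getting cheaper or by being used more efficiently.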

Connectivity Tech Discussions

Our Connectivity Tech Discussions Between Two Palms video series shines the spotlight on Alex and technical guests, diving deep into conversations about the latest Internet technology, including Starlink satellite, WiFi 7, Apple, fiber optics, new routers, remote connectivity, and networking protocols.

Join us and let's talk tech!